Next up: big parts of the demo will use movement data, meaning the position and rotation of someone’s head, left hand and right hand while playing Beat Saber.

Details to be revealed over coming posts, but I wanted a way to capture and transmit movement data, both for later analysis (hint: AI / Machine Learning!) and for some other purposes (hint: AR!).

This actually came together pretty darn quickly.

The Unity bit

First, the BSIPA framework gives us a handy method, OnActiveSceneChanged. Once again, we check to see if we’ve moved to “GameCore”. If we have, we run:

new GameObject("BeatBrain collector").AddComponent<BeatBrainCollector>();
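As a rough sketch, the scene check could look something like the following. The class name BeatBrainPlugin and the Hook() method are made up for illustration, and the callback is wired through Unity's own SceneManager.activeSceneChanged event rather than through BSIPA, whose exact plugin wiring depends on the version in use. BeatBrainCollector is stubbed so the snippet stands alone.

```csharp
using UnityEngine;
using UnityEngine.SceneManagement;

// Hypothetical plugin class; the real mod's class and BSIPA attributes may differ.
public class BeatBrainPlugin
{
    // Assumed setup method: call once when the plugin is loaded.
    public void Hook()
    {
        SceneManager.activeSceneChanged += OnActiveSceneChanged;
    }

    public void OnActiveSceneChanged(Scene prevScene, Scene nextScene)
    {
        // "GameCore" is the scene that is active while a level is being played.
        if (nextScene.name == "GameCore")
        {
            new GameObject("BeatBrain collector").AddComponent<BeatBrainCollector>();
        }
    }
}

// Stand-in for the real collector component.
public class BeatBrainCollector : MonoBehaviour { }
```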

As you might guess, BeatBrainCollector does all of the work. It starts a little coroutine in Awake(), which waits until BS_Utils.Plugin.LevelData.IsSet becomes true.
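A minimal sketch of that wait, assuming Unity's WaitUntil yield instruction is used for the polling (the real mod may poll differently). The coroutine name WaitForLevelData and the log message are illustrative only.

```csharp
using System.Collections;
using UnityEngine;

public class BeatBrainCollector : MonoBehaviour
{
    private void Awake()
    {
        StartCoroutine(WaitForLevelData());
    }

    // Hypothetical coroutine name; suspends until BS_Utils has level data.
    private IEnumerator WaitForLevelData()
    {
        // WaitUntil re-evaluates the predicate each frame and resumes once it is true.
        yield return new WaitUntil(() => BS_Utils.Plugin.LevelData.IsSet);

        // From here the level information is available and recording can begin.
        Debug.Log("BeatBrain: level data is set, starting capture");
    }
}
```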

From this point, we have access to all kinds of information – much of which I found by using various C# reverse-engineering tools on Beat Saber’s compiled code, as well as plain old IntelliSense.

Serialising State

Right now, the mod creates a file in a subfolder and starts using a BinaryWriter to throw data into it. It writes a whole load of stats first as a header: the player’s Steam name, Steam ID, XR/VR device model, level ID, song name, BPM, difficulty and various gameplay flags.

Then it’s just a case of writing the location (a vector) and rotation (a quaternion) for the head, left hand and right hand objects, every single frame, along with a timestamp indicating how many “ticks” (.NET’s smallest unit of time) have passed since play started.
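To make the per-frame layout concrete, here is a small sketch of how one frame could be written with BinaryWriter. The field order, the Pose struct and the FrameWriter class are assumptions for illustration, not the mod's actual format. It uses System.Numerics types so it runs outside Unity; inside a mod you would use UnityEngine.Vector3 and UnityEngine.Quaternion instead.

```csharp
using System.IO;
using System.Numerics;

// Hypothetical container for one tracked object (head or hand).
public readonly struct Pose
{
    public readonly Vector3 Position;
    public readonly Quaternion Rotation;

    public Pose(Vector3 position, Quaternion rotation)
    {
        Position = position;
        Rotation = rotation;
    }
}

public static class FrameWriter
{
    // Assumed layout: 8-byte tick count, then 3 poses of 7 floats each = 92 bytes.
    public const int FrameSizeBytes = sizeof(long) + 3 * 7 * sizeof(float);

    public static void WriteFrame(BinaryWriter writer, long elapsedTicks,
                                  Pose head, Pose leftHand, Pose rightHand)
    {
        writer.Write(elapsedTicks);
        WritePose(writer, head);
        WritePose(writer, leftHand);
        WritePose(writer, rightHand);
    }

    private static void WritePose(BinaryWriter writer, Pose pose)
    {
        // Position as x, y, z.
        writer.Write(pose.Position.X);
        writer.Write(pose.Position.Y);
        writer.Write(pose.Position.Z);
        // Rotation as x, y, z, w.
        writer.Write(pose.Rotation.X);
        writer.Write(pose.Rotation.Y);
        writer.Write(pose.Rotation.Z);
        writer.Write(pose.Rotation.W);
    }
}
```

A fixed-size frame like this keeps the file easy to seek through: frame n starts at the header length plus n times FrameSizeBytes. The tick value could come from something like a Stopwatch started when play begins, passed in as stopwatch.Elapsed.Ticks.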

What next?

To make this a whole load easier, I’m now building an API which will accept this data over HTTP, live as it’s recorded, and index it for later retrieval. I need a few people to provide data for the various analyses and purposes to work, which is a fun thing to ask.

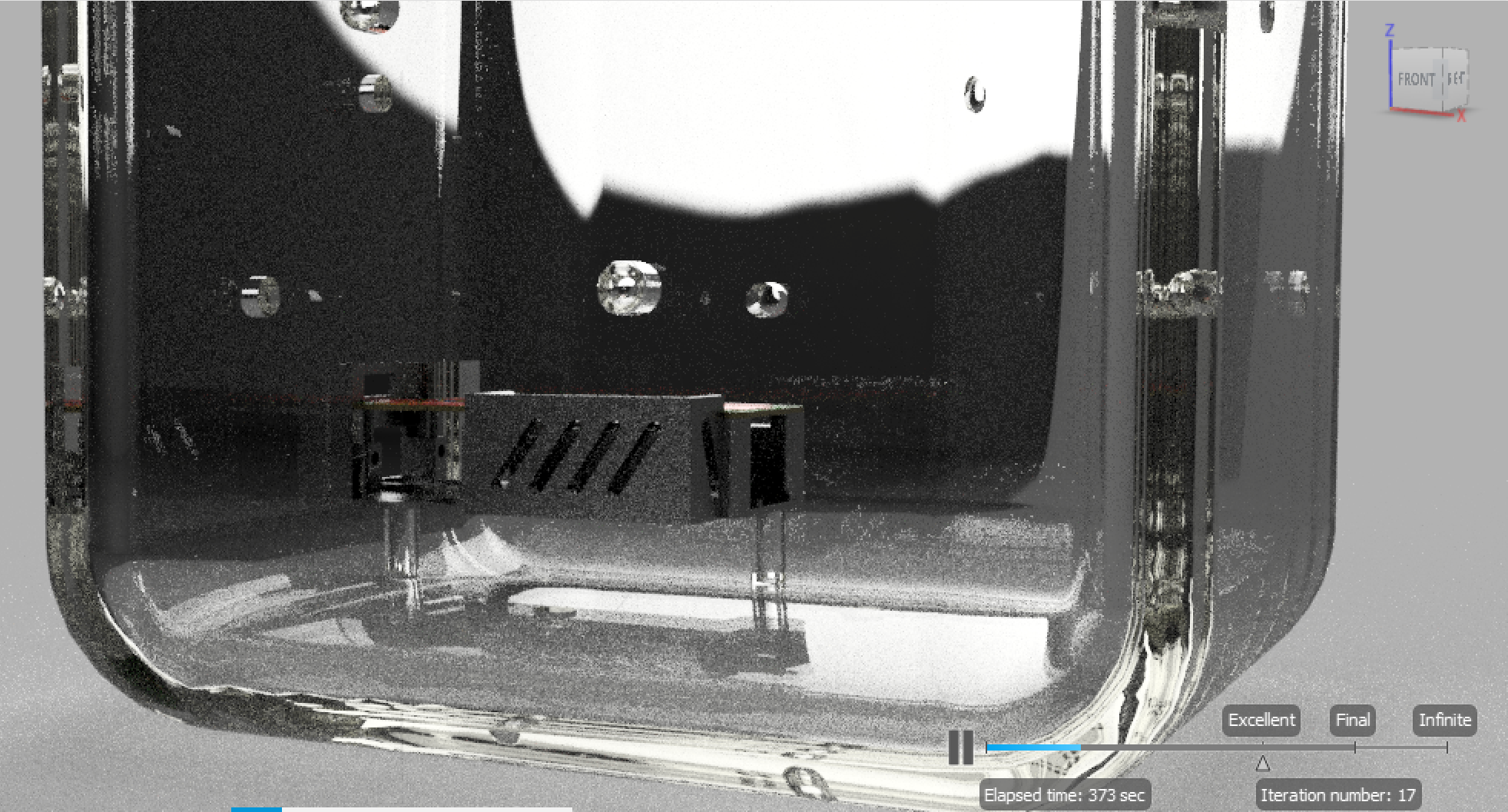

I’ve also put together a quick Unity app which does the reverse: reads the frames from a file and sets the location/rotation of some placeholder assets. Pretty cool!
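The reading side could be sketched like this. It assumes each frame is an 8-byte tick count followed by position (x, y, z) and rotation (x, y, z, w) floats for head, left hand and right hand, that the header has already been consumed, and that the stream is seekable. The Frame, Pose and FrameReader names are hypothetical, and System.Numerics types stand in for Unity's.

```csharp
using System.Collections.Generic;
using System.IO;
using System.Numerics;

public struct Pose
{
    public Vector3 Position;
    public Quaternion Rotation;
}

public struct Frame
{
    public long Ticks;
    public Pose Head;
    public Pose LeftHand;
    public Pose RightHand;
}

public static class FrameReader
{
    // Lazily yields frames until the end of the stream is reached.
    public static IEnumerable<Frame> ReadFrames(BinaryReader reader)
    {
        Stream stream = reader.BaseStream;
        while (stream.Position < stream.Length)
        {
            yield return new Frame
            {
                Ticks = reader.ReadInt64(),
                Head = ReadPose(reader),
                LeftHand = ReadPose(reader),
                RightHand = ReadPose(reader),
            };
        }
    }

    private static Pose ReadPose(BinaryReader reader)
    {
        // Arguments are evaluated left to right, matching the assumed write order.
        var position = new Vector3(reader.ReadSingle(), reader.ReadSingle(), reader.ReadSingle());
        var rotation = new Quaternion(reader.ReadSingle(), reader.ReadSingle(),
                                      reader.ReadSingle(), reader.ReadSingle());
        return new Pose { Position = position, Rotation = rotation };
    }
}
```

In a Unity playback app, each frame's values would then be copied onto the placeholder objects' transform.position and transform.rotation, using the tick count to decide when to advance to the next frame.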